Twitter haters, beware. Steven Berlin Johnson’s Time cover story, How Twitter Will Change the Way We Live, says Twitter’s key elements (the follower structure, link-sharing, real-time searching) are here to stay, and that every major channel of information will be “Twitterfied” in one way or another in the coming years. Then there’s this:

When we talk about innovation and global competitiveness, we tend to fall back on the easy metric of patents and Ph.D.s. It turns out the U.S. share of both has been in steady decline since peaking in the early ’70s. (In 1970, more than 50% of the world’s graduate degrees in science and engineering were issued by U.S. universities.) Since the mid-’80s, a long progression of doomsayers have warned that our declining market share in the patents-and-Ph.D.s business augurs dark times for American innovation. The specific threats have changed. It was the Japanese who would destroy us in the ’80s; now it’s China and India.

But what actually happened to American innovation during that period? We came up with America Online, Netscape, Amazon, Google, Blogger, Wikipedia, Craigslist, TiVo, Netflix, eBay, the iPod and iPhone, Xbox, Facebook and Twitter itself. Sure, we didn’t build the Prius or the Wii, but if you measure global innovation in terms of actual lifestyle-changing hit products and not just grad students, the U.S. has been lapping the field for the past 20 years.

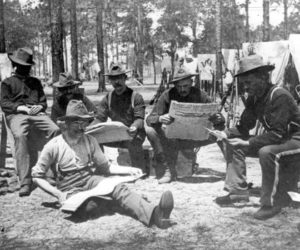

Read the full article. His expectations for how we’ll get our news and search for information, and for the future of advertising, are spot on. Here’s the backstory on the Time cover image.

In other Twitter news… What does science have to say?

[T]wo studies suggest information overload can trigger the brain’s ‘fight or flight’ response – and sideline more compassionate, thoughtful responses to news and information.

One of the new studies suggests rapid fire news updates and instant social interaction are too fast for the ‘moral compass’ of the brain to process.

It also suggests that heavy Twitter and Facebook users could become ‘indifferent to human suffering’ because they never get time to reflect and fully experience emotions about other people’s feelings. […]

The second study at the University of California, San Diego, showed that universal traits of human wisdom – such as compassion, empathy and altruism – are hard-wired into brains.

The brain cells dealing with those emotions are found in the pre-frontal cortex – the slower acting part of the brain that is sidelined when the world is stressful.

Well, golly, where have we heard that before? I’ll see you those two studies and raise you this one…

Designing Choreographies for the “New Economy of Attention”:

The nature of the academic lecture has changed with the introduction of wi-fi and cellular technologies. Interacting with personal screens during a lecture or other live event has become commonplace and, as a result, the economy of attention that defines these situations has changed. Is it possible to pay attention when sending a text message or surfing the web? For that matter, does distraction always detract from the learning that takes place in these environments? In this article, we ask questions concerning the texture and shape of this emerging economy of attention. We do not take a position on the efficiency of new technologies for delivering educational content or their efficacy of competing for users’ time and attention. Instead, we argue that the emerging social media provide new methods for choreographing attention in line with the performative conventions of any given situation. Rather than banning laptops and phones from the lecture hall and the classroom, we aim to ask what precisely they have on offer for these settings understood as performative sites, as well as for a culture that equates individual attentional behavior with intellectual and moral aptitude.

That’s via Howard Rheingold, who is also quoted in Twitter Goes to College from US News.